Change Data Capture (CDC) and Apache Kafka are often paired to handle real-time data flow between systems. CDC detects changes in a source database, and Kafka carries those changes as a stream of events to every system that needs them.

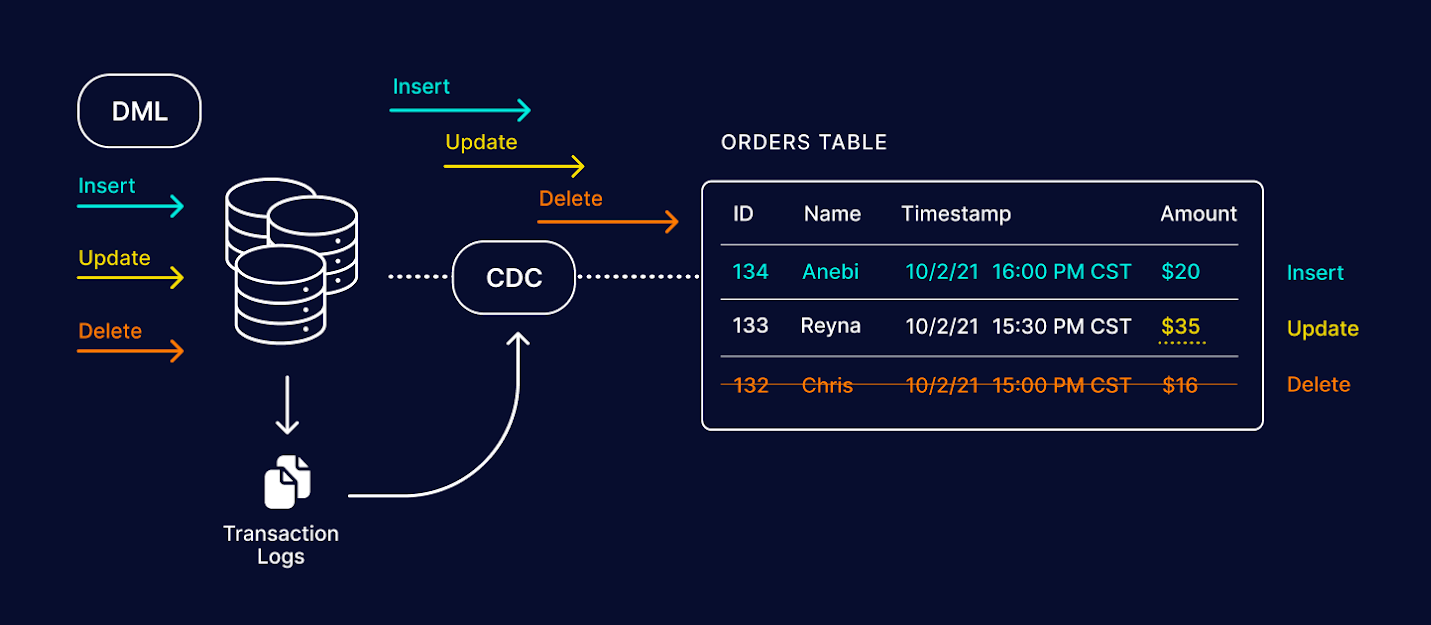

Capturing Changes with CDC: Change Data Capture is the mechanism that identifies and captures changes (inserts, updates, and deletes) in a database. It tracks changes at the row level, so only what changed is propagated rather than whole tables, which keeps the impact on source performance low.
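To make the idea of a row-level change concrete, here is a minimal sketch that compares two snapshots of a table and emits one event per changed row. The `ChangeEvent` shape, the `c`/`u`/`d` operation codes, and the `diff_snapshots` helper are assumptions made for illustration. Production CDC tools typically read the database transaction log rather than comparing snapshots, so treat this as a stand-in for the concept, not a description of any particular tool.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class ChangeEvent:
    """Hypothetical change record: one event per changed row."""
    table: str
    op: str                  # "c" = create, "u" = update, "d" = delete
    before: Optional[dict]   # row state before the change (None for creates)
    after: Optional[dict]    # row state after the change (None for deletes)


def diff_snapshots(table, old_rows, new_rows):
    """Compare two snapshots keyed by primary key and return change events."""
    events = []
    for key, row in new_rows.items():
        if key not in old_rows:
            events.append(ChangeEvent(table, "c", None, row))
        elif old_rows[key] != row:
            events.append(ChangeEvent(table, "u", old_rows[key], row))
    for key, row in old_rows.items():
        if key not in new_rows:
            events.append(ChangeEvent(table, "d", row, None))
    return events


old = {
    1: {"id": 1, "email": "a@example.com"},
    2: {"id": 2, "email": "b@example.com"},
}
new = {
    1: {"id": 1, "email": "a@new.example.com"},
    3: {"id": 3, "email": "c@example.com"},
}
events = diff_snapshots("customers", old, new)
ops = [e.op for e in events]  # one update, one create, one delete
```

Only three small events leave the source here, regardless of how many unchanged rows the table holds.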

Kafka: The Streaming Platform: Apache Kafka is the streaming platform that provides fault-tolerant, scalable transport for the captured changes. Its distributed architecture replicates data across brokers for reliability, and it preserves message order within each partition. Order is not guaranteed across the partitions of a multi-partition topic.

Synergies in Action: As changes occur in the source database, CDC captures them and Kafka transports them to target systems in near real time. Downstream applications, databases, or analytics platforms therefore receive recent data promptly.
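The downstream half of this flow can be sketched as a consumer that replays change events onto a target store. The event layout (`op`, `key`, `after`) and the `apply_change` function are hypothetical, and a plain dictionary stands in for the target database. The stream is an in-memory list so the example runs without a Kafka cluster.

```python
def apply_change(target, event):
    """Apply one change event to the target as an upsert or a delete."""
    key = event["key"]
    if event["op"] == "d":
        target.pop(key, None)          # deleting a missing row is a no-op
    else:
        target[key] = event["after"]   # creates and updates both overwrite


# Events in the order they were captured at the source.
stream = [
    {"op": "c", "key": 1, "after": {"id": 1, "status": "new"}},
    {"op": "u", "key": 1, "after": {"id": 1, "status": "paid"}},
    {"op": "c", "key": 2, "after": {"id": 2, "status": "new"}},
    {"op": "d", "key": 2, "after": None},
]

replica = {}
for event in stream:
    apply_change(replica, event)
```

Writing the apply step as an overwrite means that replaying the whole stream a second time leaves the replica in the same final state, which helps if the pipeline redelivers events after a failure.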

Benefits of CDC Kafka Integration

Real-Time Insights: Organizations gain immediate access to the latest data, empowering timely decision-making.

Scalability: Kafka accommodates growing data volumes by splitting topics into partitions that can be spread across additional brokers.

Fault Tolerance: Kafka replicates partitions across brokers, so with a replication factor above one, data delivery can continue when an individual broker fails.

Consistency Across Systems: Changes in the source system are propagated to all connected systems, so they converge on the same data shortly after each change.

Implementation Best Practices

Configuring CDC: Configure CDC carefully so that it captures only the relevant changes, such as the tables and columns downstream systems actually need, without overwhelming the system.
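One way to picture such a configuration is as an include list of tables plus an exclude list of columns. The sketch below is hypothetical: `CAPTURE_CONFIG`, its keys, and `filter_event` are invented for illustration and do not correspond to the settings of any specific CDC tool.

```python
# Hypothetical capture settings: which tables to follow, which columns to drop.
CAPTURE_CONFIG = {
    "include_tables": {"orders", "customers"},
    "exclude_columns": {"customers": {"password_hash"}},
}


def filter_event(event, config):
    """Return the event with excluded columns removed, or None if the table is not captured."""
    if event["table"] not in config["include_tables"]:
        return None
    excluded = config["exclude_columns"].get(event["table"], set())
    after = event["after"]
    if after is not None:
        after = {col: val for col, val in after.items() if col not in excluded}
    return {**event, "after": after}


audit_event = {"table": "audit_log", "op": "c", "after": {"id": 9}}
customer_event = {
    "table": "customers",
    "op": "u",
    "after": {"id": 1, "email": "a@example.com", "password_hash": "x"},
}

skipped = filter_event(audit_event, CAPTURE_CONFIG)    # table not in the include list
kept = filter_event(customer_event, CAPTURE_CONFIG)    # sensitive column removed
```

Filtering at capture time keeps unneeded tables out of the pipeline entirely, rather than asking every consumer to discard them.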

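Topic Partitioning in Kafka: Proper topic partitioning optimizes data distribution, enhancing scalability and parallelism. Because Kafka preserves order only within a partition, choose a message key (for example, the row's primary key) that keeps all changes to the same row in the same partition.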
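The effect of keying on partition assignment can be sketched as follows. The `partition_for` function is a simplified stand-in: it uses CRC32 for a stable hash, whereas Kafka's own default partitioner uses a different hash function, so the partition numbers here will not match what a real producer would choose.

```python
import zlib
from collections import defaultdict


def partition_for(key: str, num_partitions: int) -> int:
    """Map a message key to a partition with a stable hash (illustrative only)."""
    return zlib.crc32(key.encode("utf-8")) % num_partitions


NUM_PARTITIONS = 3

# (key, change) pairs in source order; the key is the row's primary key.
changes = [
    ("order-1", "created"),
    ("order-2", "created"),
    ("order-1", "paid"),
    ("order-2", "cancelled"),
    ("order-1", "shipped"),
]

partitions = defaultdict(list)
for key, change in changes:
    partitions[partition_for(key, NUM_PARTITIONS)].append((key, change))

# Every change for a given key sits in one partition, in source order.
order_1_history = [
    change
    for key, change in partitions[partition_for("order-1", NUM_PARTITIONS)]
    if key == "order-1"
]
```

Different keys may share a partition, but a single key never spans two, so per-row ordering survives while unrelated rows can be processed in parallel.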

Monitoring and Maintenance: Regular monitoring of the CDC Kafka pipeline is crucial for identifying issues promptly and ensuring smooth operations.
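A common signal to watch is consumer lag: the gap between the latest offset written to each partition and the offset the consumer has committed. The sketch below computes lag from two dictionaries of offsets. The offset values and the alert threshold are made up for illustration, and in practice the numbers would come from Kafka's tooling or client APIs rather than hard-coded literals.

```python
def consumer_lag(end_offsets, committed_offsets):
    """Per-partition lag: latest written offset minus last committed offset."""
    return {
        partition: end - committed_offsets.get(partition, 0)
        for partition, end in end_offsets.items()
    }


def partitions_over_threshold(lag, threshold):
    """Partitions whose lag exceeds the alert threshold, sorted for stable output."""
    return sorted(p for p, value in lag.items() if value > threshold)


# Illustrative numbers only.
end_offsets = {0: 1500, 1: 980, 2: 2200}
committed_offsets = {0: 1495, 1: 980, 2: 1200}

lag = consumer_lag(end_offsets, committed_offsets)
alerts = partitions_over_threshold(lag, threshold=100)
```

A lag that keeps growing on one partition usually points to a slow or stuck consumer, which is the kind of issue regular monitoring is meant to surface early.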

Challenges and Considerations

Latency: Although the pipeline is described as real-time, data delivery is subject to some latency, which depends on the complexity of the data flow.
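End-to-end latency can be estimated by comparing the time a change was committed at the source with the time it was applied at the target. The sketch below assumes each event carries a source timestamp in milliseconds. The field names and sample values are hypothetical, and the measurement is only meaningful if the source and target clocks are reasonably synchronized.

```python
import statistics


def latency_ms(event):
    """Milliseconds between the source commit and the apply step at the target."""
    return event["applied_ts_ms"] - event["source_ts_ms"]


# Illustrative samples: (source commit time, time applied downstream).
samples = [
    {"source_ts_ms": 1000, "applied_ts_ms": 1040},
    {"source_ts_ms": 2000, "applied_ts_ms": 2025},
    {"source_ts_ms": 3000, "applied_ts_ms": 3400},
]

latencies = [latency_ms(e) for e in samples]
median_latency = statistics.median(latencies)
worst_latency = max(latencies)
```

Tracking the worst case alongside the median helps distinguish a pipeline that is uniformly slow from one that is usually fast but occasionally stalls.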

Data Schema Evolution: Changes in data schemas must be managed meticulously to prevent conflicts and disruptions in the pipeline.
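One defensive pattern on the consumer side is a tolerant reader: fill in defaults for fields that older events lack and ignore fields the consumer does not know about. The sketch below is a minimal illustration. The `EXPECTED_FIELDS` mapping and the `normalize` helper are hypothetical, and many pipelines manage compatibility with a dedicated schema registry instead.

```python
# Fields this consumer understands, with defaults for events that predate them.
EXPECTED_FIELDS = {
    "id": None,
    "email": None,
    "loyalty_tier": "standard",   # column added to the source table later
}


def normalize(row, expected_fields):
    """Project a row onto the expected fields, defaulting any that are missing."""
    return {field: row.get(field, default) for field, default in expected_fields.items()}


old_shape = {"id": 1, "email": "a@example.com"}                      # before the column was added
new_shape = {"id": 2, "email": "b@example.com", "loyalty_tier": "gold", "legacy_flag": True}

normalized_old = normalize(old_shape, EXPECTED_FIELDS)
normalized_new = normalize(new_shape, EXPECTED_FIELDS)
```

With this approach, adding a column at the source does not break consumers that have not been updated yet, and consumers that have been updated can still read older events.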