Modern applications often rely on AI models that evolve over time, which means the deployment pipeline has become just as important to secure as the model itself. Engineering teams must handle data ingestion, model training, validation, packaging, and real-time updates across multiple environments. Any weakness in this chain can give attackers room to insert malicious code, poison data, alter configurations, or influence model behavior in harmful ways.

Securing model deployment pipelines effectively requires looking beyond traditional software practices. AI introduces new risks tied to data quality, version control, inference behavior, and automated workflows, so a strong security approach must protect every stage from development to production.

Why Model Deployment Pipelines Need Special Protection

Models Rely on Constantly Changing Data

Traditional applications do not change unless a developer updates the code. AI models, by contrast, are retrained or fine-tuned as their underlying data changes, so their behavior depends on data as much as on code. This means shifts in data quality or hidden manipulation can compromise the entire system if the pipeline is not secure.

Pipelines Often Involve Multiple Automated Steps

From preprocessing to training to deployment, most AI teams rely on automation. While automation improves speed, it also increases the risk that a mistake or malicious input can propagate to production without being noticed.
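
One way to keep a bad input from silently reaching production is to make every automated stage fail fast: if a stage's check does not pass, the run stops and nothing downstream executes. The sketch below assumes a hypothetical `run_pipeline` helper and toy stages that share a context dictionary; real stages would call your own preprocessing, training, and deployment tooling.

```python
from typing import Callable, Dict, List, Tuple

# Each stage inspects or updates a shared context and returns True on success.
Stage = Callable[[Dict], bool]


class PipelineHalted(Exception):
    """Raised when a stage fails so that no later stage runs."""


def run_pipeline(stages: List[Tuple[str, Stage]], context: Dict) -> List[str]:
    completed: List[str] = []
    for name, stage in stages:
        if not stage(context):
            # Stop here instead of handing a questionable artifact to the next step.
            raise PipelineHalted(f"stage {name!r} failed after {completed}")
        completed.append(name)
    return completed


# Hypothetical stages for illustration only.
EXAMPLE_STAGES: List[Tuple[str, Stage]] = [
    ("preprocess", lambda ctx: ctx.get("rows", 0) > 0),
    ("train", lambda ctx: True),
    ("validate", lambda ctx: ctx.get("accuracy", 0.0) >= 0.9),
    # setdefault marks the context as deployed and returns True.
    ("deploy", lambda ctx: ctx.setdefault("deployed", True)),
]
```

With a context such as `{"rows": 100, "accuracy": 0.5}`, the validate stage fails, `PipelineHalted` is raised, and the deploy stage never runs. The design choice is that an explicit exception is harder to ignore than a logged warning.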

Multiple Teams Interact With the Pipeline

Unlike classic software workflows, model pipelines involve data scientists, ML engineers, DevOps teams, and security teams. Without clear controls, this collaborative environment makes it easier for misconfigurations or unauthorized changes to slip through.

Key Risks Inside Model Deployment Pipelines

Data Poisoning and Manipulated Inputs

If attackers influence the data used for training or fine-tuning, they can intentionally weaken the model or cause it to behave incorrectly. Engineers must ensure that all data entering the pipeline is validated, trusted, and monitored.
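
A basic defense is to check every incoming record against a data contract and quarantine anything that does not match, rather than training on it. This is a minimal sketch; the field names, label set, bounds, and source names are hypothetical placeholders for whatever your own dataset defines.

```python
from typing import Dict, List, Tuple

# Hypothetical data contract; real values come from your own dataset.
ALLOWED_LABELS = {0, 1}
FEATURE_BOUNDS = {"age": (0, 120), "amount": (0.0, 1_000_000.0)}
TRUSTED_SOURCES = {"warehouse_export", "labeling_vendor_a"}


def validate_record(record: Dict) -> List[str]:
    """Return a list of problems; an empty list means the record passed."""
    problems = []
    if record.get("source") not in TRUSTED_SOURCES:
        problems.append(f"untrusted source: {record.get('source')!r}")
    if record.get("label") not in ALLOWED_LABELS:
        problems.append(f"unexpected label: {record.get('label')!r}")
    for name, (low, high) in FEATURE_BOUNDS.items():
        value = record.get(name)
        # Reject booleans, non-numeric values, missing values, and out-of-range numbers.
        if isinstance(value, bool) or not isinstance(value, (int, float)) or not (low <= value <= high):
            problems.append(f"{name} missing or out of range: {value!r}")
    return problems


def split_records(records: List[Dict]) -> Tuple[List[Dict], List[Tuple[Dict, List[str]]]]:
    """Separate clean records from ones that should be held for review."""
    accepted, quarantined = [], []
    for record in records:
        problems = validate_record(record)
        if problems:
            quarantined.append((record, problems))
        else:
            accepted.append(record)
    return accepted, quarantined
```

Quarantining instead of silently dropping keeps suspicious records available for investigation, which matters when the goal is to find out whether someone is trying to poison the data.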

Vulnerable Dependencies and Model Packaging

AI workloads rely on specialized libraries, hardware drivers, and packaging formats. If these components are outdated or tampered with, attackers can introduce harmful code into the deployment pipeline.
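
For Python-based workloads, one concrete control is to require that every dependency is pinned to an exact version and carries a SHA-256 hash, which is what pip's hash-checking mode (`pip install --require-hashes -r requirements.txt`) enforces at install time. The sketch below is a hypothetical pre-install audit of a requirements file; it uses simplified parsing and is not a full implementation of pip's requirements format.

```python
import re
from typing import List

# A requirement counts as pinned if it names one exact version with "==".
PINNED = re.compile(r"^[A-Za-z0-9][A-Za-z0-9._-]*(\[[A-Za-z0-9,._-]+\])?==[^\s;]+")
# Hash-checking mode expects entries like --hash=sha256:<64 hex chars>.
SHA256_HASH = re.compile(r"--hash=sha256:[0-9a-f]{64}")


def logical_lines(text: str) -> List[str]:
    """Join backslash-continued lines and drop blank lines and full-line comments."""
    lines: List[str] = []
    buffer = ""
    for raw in text.splitlines():
        stripped = raw.strip()
        if not stripped or stripped.startswith("#"):
            continue
        if stripped.endswith("\\"):
            buffer += stripped[:-1] + " "
            continue
        lines.append((buffer + stripped).strip())
        buffer = ""
    if buffer.strip():
        lines.append(buffer.strip())
    return lines


def audit_requirements(text: str) -> List[str]:
    findings: List[str] = []
    for line in logical_lines(text):
        if line.startswith("-"):
            # Options such as --index-url or -r change where packages come from.
            findings.append(f"review option line: {line}")
            continue
        if not PINNED.match(line):
            findings.append(f"not pinned to an exact version: {line}")
        if not SHA256_HASH.search(line):
            findings.append(f"missing sha256 hash: {line}")
    return findings
```

A file containing `torch>=2.0` would produce two findings (no exact pin, no hash), which a CI job could treat as a build failure.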

Unauthorized Model Changes

A deployment pipeline is only secure if the right people have the right permissions. Without strict access control, attackers or even internal users could push unapproved models into production.

Lack of Visibility Into Model Behavior

Once a model is deployed, teams often lack the observability needed to detect when its behavior changes unexpectedly. Without monitoring, it is difficult to know when something in the pipeline has gone wrong.

How Engineers Can Secure Model Deployment Pipelines Effectively

Validate and Protect Data at Every Stage

Data must be checked before it enters any part of the pipeline. Engineers should verify data sources, use strong access policies, and flag anomalies that indicate tampering. Maintaining a clear data lineage helps teams trace issues back to the original source.
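
Data lineage can be as simple as recording, for each dataset version, where it came from and a SHA-256 digest of its contents. Chaining each entry to the hash of the previous one makes later edits to the log detectable. This is a minimal sketch with hypothetical function names; a production system would store the log in append-only storage and keep the latest hash somewhere the pipeline cannot overwrite, since an attacker who can rewrite the whole chain can also recompute it.

```python
import hashlib
import json
import time
from typing import Dict, List, Optional

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def sha256_bytes(data: bytes) -> str:
    return hashlib.sha256(data).hexdigest()


def _entry_hash(body: Dict) -> str:
    # Sorted keys give a stable JSON encoding, so the hash is reproducible.
    return sha256_bytes(json.dumps(body, sort_keys=True).encode())


def add_lineage_entry(log: List[Dict], dataset: str, content: bytes,
                      source: str, timestamp: Optional[float] = None) -> Dict:
    body = {
        "dataset": dataset,
        "source": source,
        "content_sha256": sha256_bytes(content),
        "timestamp": time.time() if timestamp is None else timestamp,
        "prev_hash": log[-1]["entry_hash"] if log else GENESIS,
    }
    entry = dict(body, entry_hash=_entry_hash(body))
    log.append(entry)
    return entry


def verify_lineage(log: List[Dict]) -> bool:
    """Return False if any entry was edited, removed, or reordered."""
    prev_hash = GENESIS
    for entry in log:
        body = {k: v for k, v in entry.items() if k != "entry_hash"}
        if body["prev_hash"] != prev_hash or _entry_hash(body) != entry["entry_hash"]:
            return False
        prev_hash = entry["entry_hash"]
    return True
```

When a model misbehaves, the `content_sha256` and `source` fields let a team check exactly which data version fed a training run and where it originated.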

Use Trusted Packaging and Dependency Controls

Model artifacts should be packaged consistently with signed containers, verified dependencies, and strict version control. This prevents unauthorized libraries or harmful code from entering the pipeline.
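
The core idea behind signed artifacts is that the deploy step refuses anything whose digest does not match a manifest, and refuses any manifest that was not signed by a trusted key. The sketch below shows that flow using an HMAC from Python's standard library purely as a stand-in. This is an assumption for illustration: real pipelines typically use asymmetric signatures (for example, container signing tools or GPG), so verifiers never hold the signing key. The function names and manifest layout are hypothetical.

```python
import hashlib
import hmac
import json
from typing import Dict


def digest(data: bytes) -> str:
    return hashlib.sha256(data).hexdigest()


def sign_manifest(manifest: Dict[str, str], key: bytes) -> str:
    # HMAC stands in for a real signature scheme in this sketch.
    payload = json.dumps(manifest, sort_keys=True).encode()
    return hmac.new(key, payload, hashlib.sha256).hexdigest()


def verify_artifact(name: str, data: bytes, manifest: Dict[str, str],
                    signature: str, key: bytes) -> bool:
    # 1. The manifest must carry a valid signature from the trusted key.
    if not hmac.compare_digest(sign_manifest(manifest, key), signature):
        return False
    # 2. The artifact must be listed, and its bytes must match the recorded digest.
    expected = manifest.get(name)
    return expected is not None and hmac.compare_digest(expected, digest(data))
```

Both checks matter: the digest catches a modified model file, and the signature catches an attacker who rewrites the manifest to match a malicious file. `hmac.compare_digest` is used to avoid timing differences when comparing values.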

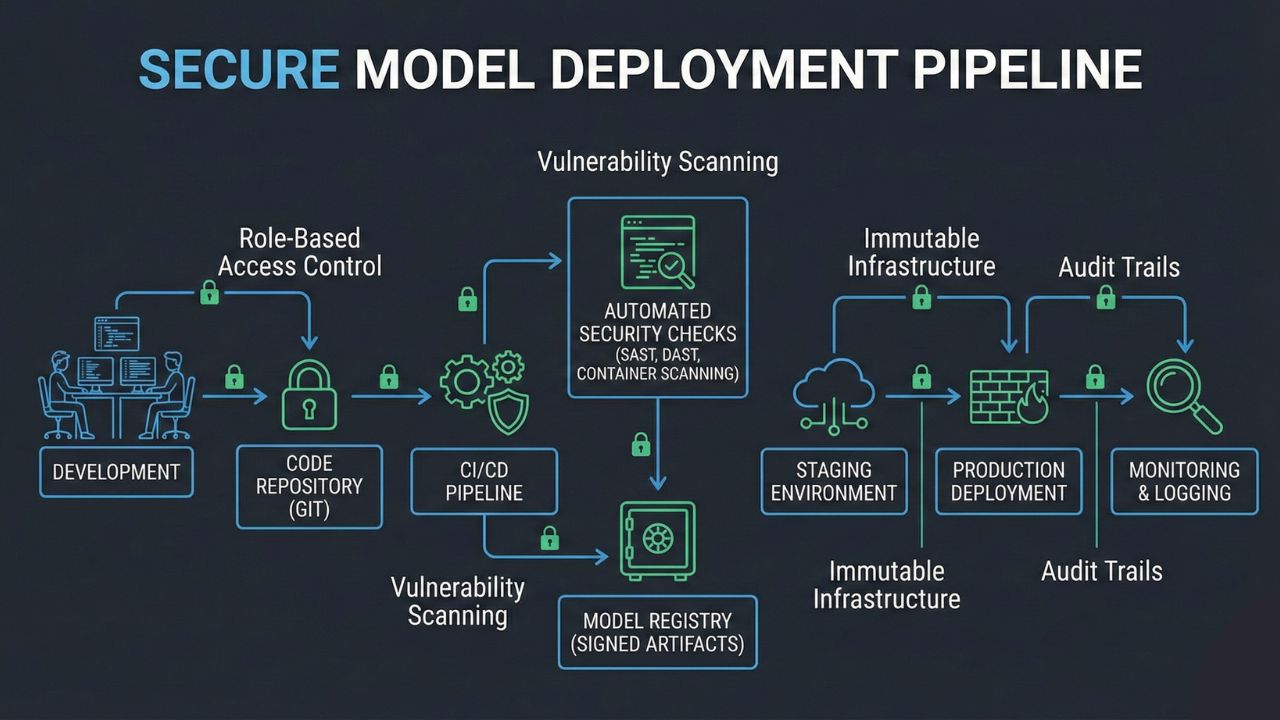

Enforce Role-Based Access and Approval Checks

Every model update should go through a controlled approval process. Teams must define who can push updates, who can approve them, and who can access sensitive environments. Logging and audit trails ensure changes can be reviewed later.
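
The approval rules described above can be sketched as a small gate that checks roles, enforces separation of duties (authors cannot approve their own updates), and writes every decision to an audit log. The role names, permissions, and classes here are hypothetical; in practice they would map onto your identity provider and CI/CD platform.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Set

# Hypothetical role-to-permission mapping.
ROLE_PERMISSIONS: Dict[str, Set[str]] = {
    "data_scientist": {"submit"},
    "ml_engineer": {"submit", "approve"},
    "release_manager": {"approve", "deploy"},
}


@dataclass
class ModelUpdate:
    model_id: str
    author: str
    approvals: Set[str] = field(default_factory=set)


@dataclass
class ApprovalGate:
    user_roles: Dict[str, str]  # user name -> role name
    required_approvals: int = 1
    audit_log: List[str] = field(default_factory=list)

    def _allowed(self, user: str, action: str) -> bool:
        role = self.user_roles.get(user, "")
        return action in ROLE_PERMISSIONS.get(role, set())

    def approve(self, update: ModelUpdate, user: str) -> bool:
        # Separation of duties: authors cannot approve their own changes.
        ok = self._allowed(user, "approve") and user != update.author
        if ok:
            update.approvals.add(user)
        self.audit_log.append(f"approve {update.model_id} by {user}: {'ok' if ok else 'denied'}")
        return ok

    def deploy(self, update: ModelUpdate, user: str) -> bool:
        # Deployment needs the deploy permission and enough independent approvals.
        ok = self._allowed(user, "deploy") and len(update.approvals) >= self.required_approvals
        self.audit_log.append(f"deploy {update.model_id} by {user}: {'ok' if ok else 'denied'}")
        return ok
```

Denied attempts are logged as well as successful ones, since repeated denials are often the first visible sign of misuse.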

Automate Security Checks Within the Pipeline

Security should be part of the deployment process, not an afterthought. Automated validation steps can scan artifacts, verify configurations, and run tests to ensure the model meets safety requirements before it reaches production.
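
One automated check worth adding is a scan of pickle-based model files, because unpickling can import and call arbitrary Python objects. The sketch below walks the raw opcode stream with the standard library's `pickletools.genops` (without loading the file) and blocks artifacts that contain opcodes able to import or invoke objects. The opcode list is a conservative choice made for this example, so pickles of legitimate custom classes will also be flagged. It handles raw pickle bytes only; formats that wrap a pickle inside an archive would need to be unpacked first, and formats that store only tensors (such as safetensors) avoid the problem entirely.

```python
import pickletools
from typing import List, Set

# Opcodes that import globals or call objects during unpickling.
DANGEROUS_OPCODES = {
    "GLOBAL", "STACK_GLOBAL", "REDUCE", "INST", "OBJ", "NEWOBJ", "NEWOBJ_EX", "BUILD",
}


def scan_pickle(data: bytes) -> List[str]:
    """Return the sorted names of risky opcodes found, without unpickling."""
    found: Set[str] = set()
    try:
        for opcode, _arg, _pos in pickletools.genops(data):
            if opcode.name in DANGEROUS_OPCODES:
                found.add(opcode.name)
    except ValueError:
        # Truncated or malformed streams are treated as a failure, not a pass.
        found.add("UNPARSEABLE")
    return sorted(found)


def security_gate(artifact: bytes) -> bool:
    findings = scan_pickle(artifact)
    if findings:
        print(f"blocked: artifact uses opcodes {findings}")
        return False
    return True
```

A pickle of plain containers such as `{"w": [0.1, 0.2]}` passes, while an object whose `__reduce__` returns a callable produces `STACK_GLOBAL` and `REDUCE` and is blocked.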

Monitor Models After Deployment

Even a well-tested model can drift or behave differently once exposed to real users. Continuous monitoring helps teams detect unexpected responses, degraded performance, or signs of misuse. Early detection allows engineers to act before issues escalate.
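
A common way to detect drift in an input feature or in model scores is the population stability index (PSI): bin the baseline and current values with the same edges, then sum (a_i − e_i) · ln(a_i / e_i) over the bins, where e_i and a_i are the baseline and current fractions. This is a minimal sketch using equal-width bins derived from the baseline; bin count, the epsilon for empty bins, and alert thresholds are assumptions to tune per feature. A frequently quoted rule of thumb treats values above roughly 0.25 as a significant shift, but it is a heuristic, not a standard.

```python
import bisect
import math
from typing import List, Sequence

EPSILON = 1e-6  # keeps empty bins from producing log(0)


def equal_width_edges(baseline: Sequence[float], n_bins: int = 10) -> List[float]:
    lo, hi = min(baseline), max(baseline)
    if hi == lo:
        hi = lo + 1.0  # avoid zero-width bins for a constant feature
    step = (hi - lo) / n_bins
    return [lo + i * step for i in range(n_bins + 1)]


def bin_fractions(values: Sequence[float], edges: Sequence[float]) -> List[float]:
    counts = [0] * (len(edges) - 1)
    for v in values:
        # Values outside the baseline range are clamped into the first or last bin.
        idx = bisect.bisect_right(edges, v) - 1
        idx = min(max(idx, 0), len(counts) - 1)
        counts[idx] += 1
    return [max(c / len(values), EPSILON) for c in counts]


def population_stability_index(baseline: Sequence[float], current: Sequence[float],
                               n_bins: int = 10) -> float:
    edges = equal_width_edges(baseline, n_bins)
    expected = bin_fractions(baseline, edges)
    actual = bin_fractions(current, edges)
    return sum((a - e) * math.log(a / e) for a, e in zip(actual, expected))
```

Running this on a schedule against a window of recent production inputs, and alerting when the index crosses a chosen threshold, gives teams an early signal that the data reaching the model no longer resembles what it was validated on.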

Test Pipelines With Realistic Adversarial Scenarios

Red team exercises and adversarial testing help uncover pipeline weaknesses that normal testing does not reveal. These simulations expose where data can be manipulated, where access controls are weak, and where automation lacks proper safeguards.
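
Adversarial scenarios can be automated as tests that attack the pipeline's own defenses and report which attacks slipped through. The sketch below uses hypothetical, simplified defenses (a digest check and a label/score contract) and four attacks. It is deliberately written to show a gap: flipping valid labels keeps every record inside the contract, so that attack goes undetected.

```python
import hashlib
import random
from typing import Dict, List


def artifact_ok(data: bytes, expected_sha256: str) -> bool:
    return hashlib.sha256(data).hexdigest() == expected_sha256


def records_ok(records: List[Dict]) -> bool:
    # Hypothetical data contract: binary labels and a score in [0, 1].
    return all(
        r.get("label") in (0, 1) and 0.0 <= r.get("score", -1.0) <= 1.0
        for r in records
    )


def flip_one_byte(data: bytes, rng: random.Random) -> bytes:
    i = rng.randrange(len(data))
    return data[:i] + bytes([data[i] ^ 0xFF]) + data[i + 1:]


def run_red_team(seed: int = 0) -> Dict[str, bool]:
    rng = random.Random(seed)
    model = b"pretend-model-weights"
    expected = hashlib.sha256(model).hexdigest()
    clean = [{"label": 0, "score": 0.2}, {"label": 1, "score": 0.9}]
    if not (artifact_ok(model, expected) and records_ok(clean)):
        raise RuntimeError("defenses must accept clean inputs")

    attacks = {
        "tampered_artifact": lambda: artifact_ok(flip_one_byte(model, rng), expected),
        "invalid_label": lambda: records_ok(clean + [{"label": 2, "score": 0.5}]),
        "out_of_range_score": lambda: records_ok(clean + [{"label": 1, "score": 42.0}]),
        # Flipping valid labels keeps every record inside the contract.
        "plausible_label_flip": lambda: records_ok(
            [{"label": 1 - r["label"], "score": r["score"]} for r in clean]
        ),
    }
    # True means the defense rejected the attacked input (the attack was caught).
    return {name: not accepted() for name, accepted in attacks.items()}
```

In this sketch `plausible_label_flip` comes back `False`, which is exactly the kind of finding a red team exercise exists to surface; closing it would require a different control, such as comparing label distributions against a trusted reference set.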

Build Skills That Support Secure Deployment Practices

Engineers working with AI systems need practical training to strengthen how they design, test, and secure model pipelines. The AI security certification from Modern Security offers hands-on lessons that help teams protect every stage of the model lifecycle.

Conclusion

Securing model deployment pipelines requires a deeper understanding of the risks associated with data, automation, and evolving model behavior. As AI systems become central to business operations, engineers must adopt methods that protect data integrity, enforce strong controls, and monitor models continuously after release.

A secure AI deployment pipeline reduces the chance of hidden vulnerabilities, limits the impact of attempted attacks, and increases confidence in the reliability of deployed models. With thoughtful planning and strong collaboration across teams, organizations can build pipelines that are both efficient and resilient.