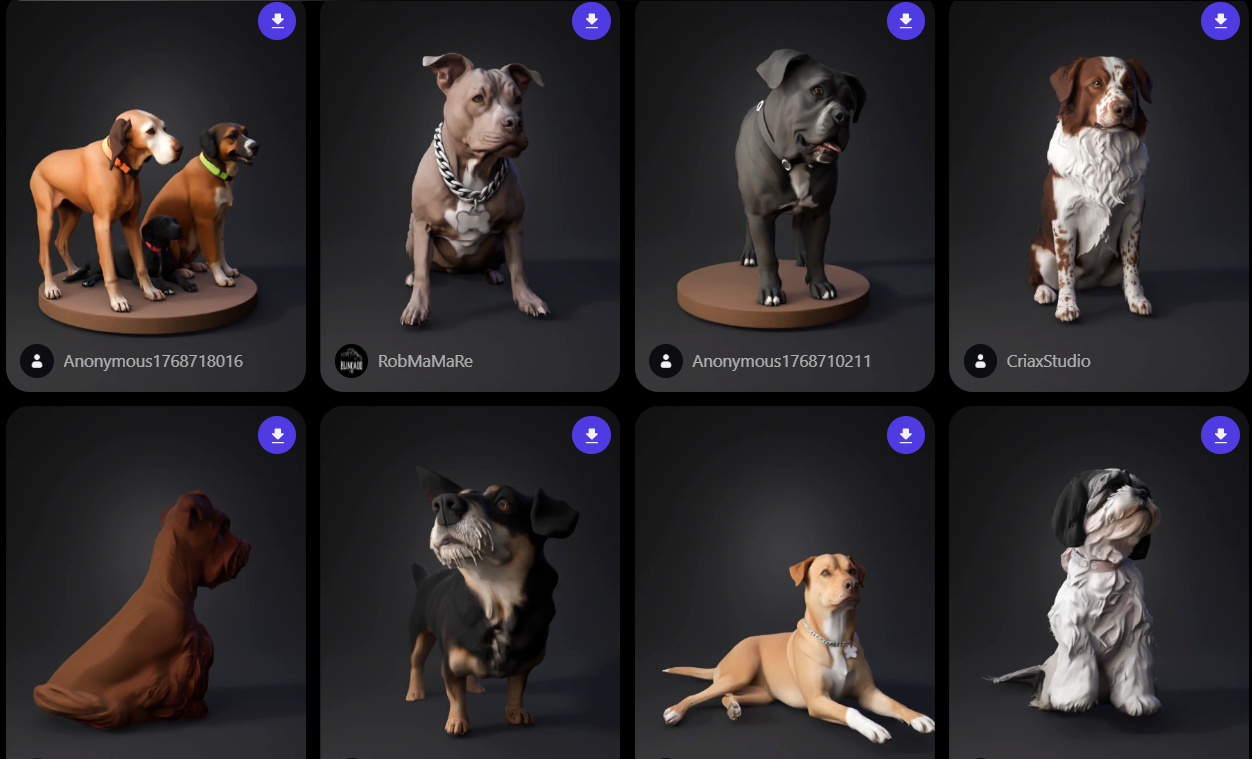

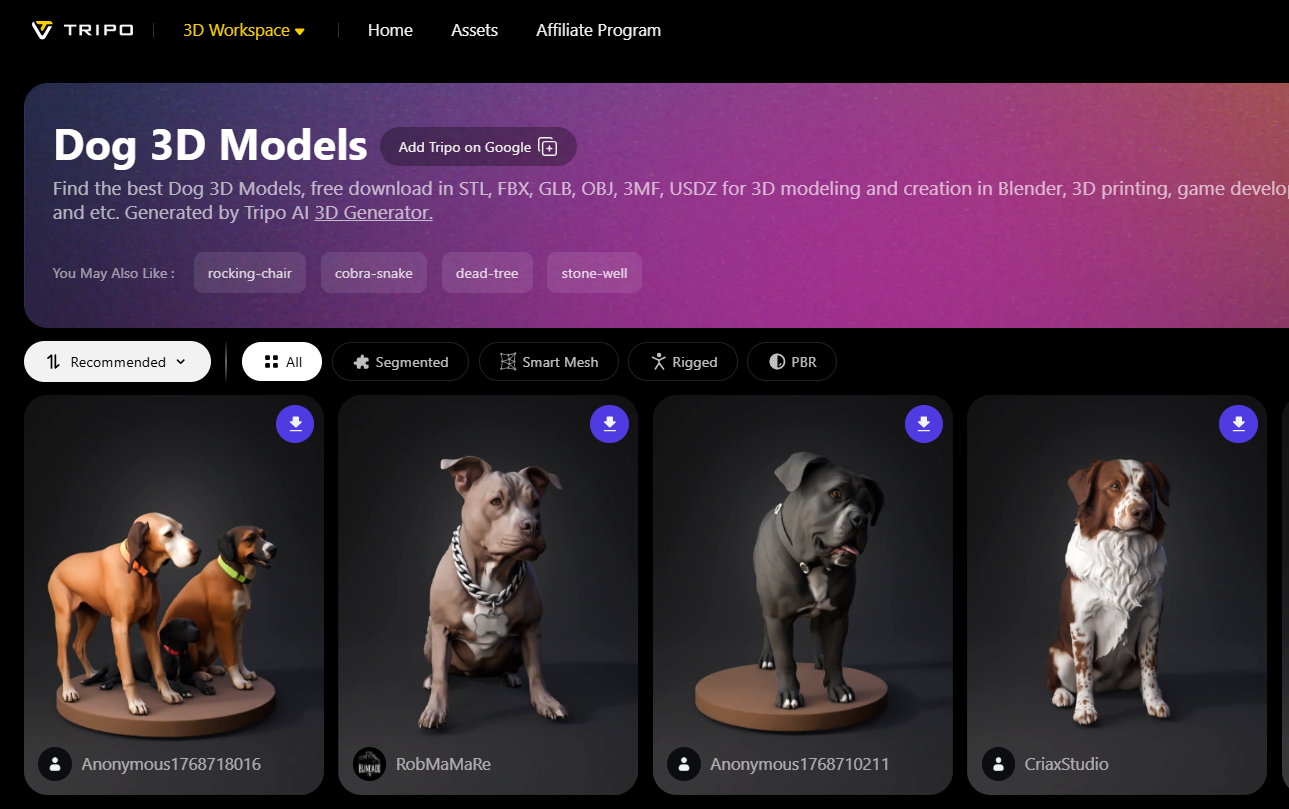

The landscape of immersive technology has reached a pivotal point where the demand for visual fidelity is no longer just a luxury but a fundamental requirement for user engagement. In 2026, as spatial computing becomes a standard part of our digital lives, the role of high-poly dog 3D models in VR and AR has shifted from simple background decoration to complex, interactive companions. For developers, the challenge lies in sourcing assets that maintain high vertex counts for extreme detail while remaining optimized for real-time rendering on standalone headsets. Exploring a modern dog 3D model gallery reveals a new generation of assets that utilize advanced topology, allowing for smooth surfaces and realistic light interaction that were once reserved for pre-rendered cinema.

The Shift Toward Spatial Computing and Hyper-Realism

The emergence of sophisticated mixed-reality hardware in 2026 has fundamentally changed how we perceive digital animals. When a user can walk around a virtual dog in their actual living room, the "uncanny valley" becomes much more apparent if the model lacks geometric density. High-poly models are now the gold standard for these experiences because they allow for physical proximity; you can lean in to see the individual strands of a Golden Retriever's fur or the wet glisten on a Labrador's nose without seeing jagged edges. This level of hyper-realism is essential for applications ranging from virtual pet simulators to high-end architectural visualizations where a realistic canine adds a sense of "home" and lived-in warmth to a digital space.

Optimizing High-Poly Meshes for Standalone VR Headsets

While high polygon counts are necessary for detail, 2026 has brought major improvements in how VR engines handle these heavy assets. Developers now use Level of Detail (LOD) systems and occlusion culling to ensure that a 100,000-polygon dog model doesn't tank the frame rate. Modern high-poly models often come with pre-baked normal maps and advanced shaders that simulate subsurface scattering, making the skin and fur look organic under the varying light conditions of an AR environment. By selecting assets from a professional dog 3D model gallery, creators can find models that are specifically "spatial-ready," meaning they feature clean edge loops that deform cleanly during complex animations, without the texture stretching common in lower-quality meshes. For more examples, visit https://studio.tripo3d.ai/3d-model-gallery/dog
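To make the LOD idea concrete, here is a minimal sketch of distance-based LOD selection. It is not taken from any particular engine: the `LodLevel` type, the `select_lod` function, the distance thresholds, and every triangle count other than the 100,000 figure mentioned above are illustrative assumptions.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class LodLevel:
    max_distance_m: float  # this level is used up to this camera distance
    triangle_count: int


# Hypothetical LOD chain for one dog model. Only the 100,000 figure comes
# from the article; the thresholds and lower counts are assumptions.
DOG_LODS = [
    LodLevel(1.5, 100_000),
    LodLevel(4.0, 40_000),
    LodLevel(10.0, 10_000),
    LodLevel(float("inf"), 2_000),
]


def select_lod(distance_m, lods=DOG_LODS):
    """Return the first level whose distance threshold covers the camera."""
    if distance_m < 0:
        raise ValueError("distance must be non-negative")
    for level in lods:
        if distance_m <= level.max_distance_m:
            return level
    return lods[-1]
```

With these assumed thresholds, the full-detail mesh is only drawn when the user is within about an arm's length of the dog, which is also when the extra geometry is actually visible.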

Interactive AI-Driven Behaviors in High-Fidelity Assets

In 2026, a top-tier dog model is defined by more than just its appearance; it is defined by its capacity for interaction. The best high-poly models now come integrated with AI-driven behavioral layers that allow the digital dog to react to the user’s voice, gestures, and even emotional tone. For instance, a high-poly Border Collie in a VR training simulation can now "sense" a user’s proximity and adjust its posture accordingly. This synergy between high-end geometry and artificial intelligence creates a sense of "presence" that is vital for the success of modern immersive experiences. These models are no longer static statues but are living components of a broader digital ecosystem that learns and evolves alongside the player.
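As a rough illustration of proximity-driven behavior, the sketch below maps the distance between the user and the dog to a posture label. This is a deliberately simple rule-based stand-in, not an AI model; the function name `choose_posture`, the posture labels, and the radii are all hypothetical.

```python
import math


def choose_posture(dog_pos, user_pos, alert_radius=3.0, greet_radius=1.0):
    """Pick a posture label from the user's distance to the dog (in meters).

    The radii are assumed values for illustration only.
    """
    distance = math.dist(dog_pos, user_pos)
    if distance <= greet_radius:
        return "greet"   # user is within reach: approach, wag, look up
    if distance <= alert_radius:
        return "alert"   # user is nearby: ears up, head turned to the user
    return "idle"        # user is far away: keep the current idle loop
```

In a real project the returned label would typically drive an animation state machine, and a learned behavior layer could replace the fixed thresholds.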

The Importance of Subsurface Scattering in Canine Eyes

One of the most difficult features to replicate in virtual reality is the depth and life of a dog's eyes. In 2026, high-poly models achieve this through multi-layered eye geometry, including a separate cornea and iris mesh. This allows for realistic refraction and "specular" highlights that shift naturally as the user moves their head. For breeds like the Siberian Husky, which often feature piercing blue eyes, the high-poly approach ensures that the intricate patterns of the iris are visible even in close-up AR views. When a digital animal makes eye contact with a user in a VR space, the biological accuracy of that gaze is what creates the emotional bond necessary for truly immersive storytelling.
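The refraction through a separate cornea mesh can be sketched with Snell's law in vector form, the same formula used by the `refract` built-in in shading languages. The code below is a plain Python version for illustration; the cornea refractive index of 1.376 is a commonly cited approximate value for the human cornea and is only assumed to be a reasonable stand-in for a dog's eye.

```python
import math

AIR_IOR = 1.0
CORNEA_IOR = 1.376  # assumption: approximate human value, used as a stand-in


def refract(incident, normal, eta):
    """Refract a unit incident vector at a surface with a unit normal.

    eta is the ratio of refractive indices (source medium / target medium).
    Returns None on total internal reflection.
    """
    d = sum(n * i for n, i in zip(normal, incident))
    k = 1.0 - eta * eta * (1.0 - d * d)
    if k < 0.0:
        return None  # no transmitted ray exists
    scale = eta * d + math.sqrt(k)
    return tuple(eta * i - scale * n for i, n in zip(incident, normal))


# A view ray entering the cornea from air at 45 degrees to the surface normal.
angle = math.radians(45)
view_ray = (math.sin(angle), 0.0, -math.cos(angle))
bent_ray = refract(view_ray, (0.0, 0.0, 1.0), AIR_IOR / CORNEA_IOR)
```

The bent ray is what would be used to look up the iris texture, which is why the iris pattern appears to shift slightly as the viewer's head moves.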

Realistic Fur Grooming and Physics for AR Integration

Fur has long been the "final frontier" for real-time animal models, but 2026 has seen the widespread adoption of "strand-based" hair systems in VR and AR. Unlike older methods that used flat planes or "shells," high-poly models now support thousands of individual hair strands that react to virtual wind and physical touch. This is particularly impressive in AR, where a dog’s fur can appear to be ruffled by a real-world fan or cast accurate shadows onto a user’s actual floor. The technical complexity of these systems requires a robust base mesh that can handle the "grooming" data, ensuring that the fur follows the contours of the dog’s muscles as it runs or jumps through a virtual obstacle course.
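One common way to make a single strand react to gravity and wind is Verlet integration with distance constraints, shown below as a minimal sketch. This is a generic technique swapped in for illustration, not the method of any specific fur system; the function name `step_strand`, the damping factor, the 90 Hz time step, and the treatment of wind as a constant acceleration are all assumptions.

```python
import math


def step_strand(points, prev_points, rest_len, wind=(0.0, 0.0, 0.0),
                gravity=(0.0, -9.81, 0.0), dt=1.0 / 90.0, damping=0.9,
                iterations=8):
    """Advance one hair strand by one time step.

    points[0] is the root, pinned to the base mesh. Returns the new
    positions and the positions to pass as prev_points next step.
    """
    accel = tuple(g + w for g, w in zip(gravity, wind))
    new_points = [points[0]]  # the root never moves
    for p, q in zip(points[1:], prev_points[1:]):
        # Verlet update: damped implicit velocity plus acceleration.
        new_points.append(tuple(
            pc + (pc - qc) * damping + a * dt * dt
            for pc, qc, a in zip(p, q, accel)))
    for _ in range(iterations):
        # Relax each segment back toward its rest length.
        for i in range(len(new_points) - 1):
            a, b = new_points[i], new_points[i + 1]
            delta = tuple(bc - ac for ac, bc in zip(a, b))
            dist = math.sqrt(sum(c * c for c in delta)) or 1e-9
            corr = (dist - rest_len) / dist
            if i == 0:
                # Root is pinned, so the child takes the full correction.
                new_points[1] = tuple(
                    bc - dc * corr for bc, dc in zip(b, delta))
            else:
                new_points[i] = tuple(
                    ac + 0.5 * dc * corr for ac, dc in zip(a, delta))
                new_points[i + 1] = tuple(
                    bc - 0.5 * dc * corr for bc, dc in zip(b, delta))
    return new_points, points


# A 5-point strand that starts horizontal and settles under gravity.
strand = [(i * 0.01, 0.0, 0.0) for i in range(5)]
previous = list(strand)
for _ in range(1000):
    strand, previous = step_strand(strand, previous, rest_len=0.01)
```

A production groom would run thousands of such strands on the GPU and add collision against the body mesh, but the per-strand update follows the same pattern.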

Advanced Rigging for Dynamic Muscle Deformation

A high-poly model is only as good as its rig, and in 2026, "muscle-based" rigging has become the industry standard for premium assets. Instead of simply rotating joints, these advanced rigs simulate the sliding of skin over bone and the contraction of muscle groups. For a sleek breed like the Doberman or Greyhound, this means that as the dog sprints in a VR game, the user can see the ripple of the shoulders and the tension in the hindquarters. This level of anatomical accuracy is what separates a "game-like" asset from a truly professional VR experience, providing the weight and momentum that the human brain expects to see in a living creature.

Future-Proofing Projects with Generative 3D Workflows

As we move further into 2026, the speed of asset creation is being revolutionized by generative AI platforms that can produce high-poly meshes from simple text or image prompts. These tools allow developers to iterate on breed variations or unique "fantasy" dogs in seconds, rather than weeks. By utilizing these emerging workflows, studios can populate vast virtual worlds with hundreds of unique, high-fidelity animals, each with distinct markings and physical traits. This democratizes the creation of high-end VR content, allowing smaller teams to compete with major studios in terms of visual quality and world-building depth, ensuring that the virtual landscapes of tomorrow are as diverse and detailed as the real world.