Implementing machine learning (ML) can feel like trying to build a plane while flying it, but the process is actually quite structured. Whether you're building a recommendation engine or a predictive tool, the workflow generally follows a standard lifecycle.

Here is a roadmap to get your project from an idea to a working model.

1. Define the Problem

Before touching any code, identify what you are trying to solve. ML isn't always the answer—sometimes a simple "if-else" statement is better.

Supervised Learning: Predicting a known target from labeled examples, either a number (e.g., house prices) or a category (e.g., spam vs. not spam).

Unsupervised Learning: Finding hidden patterns (e.g., grouping customers by behavior).

Reinforcement Learning: Learning through trial and error (e.g., teaching an AI to play a game).

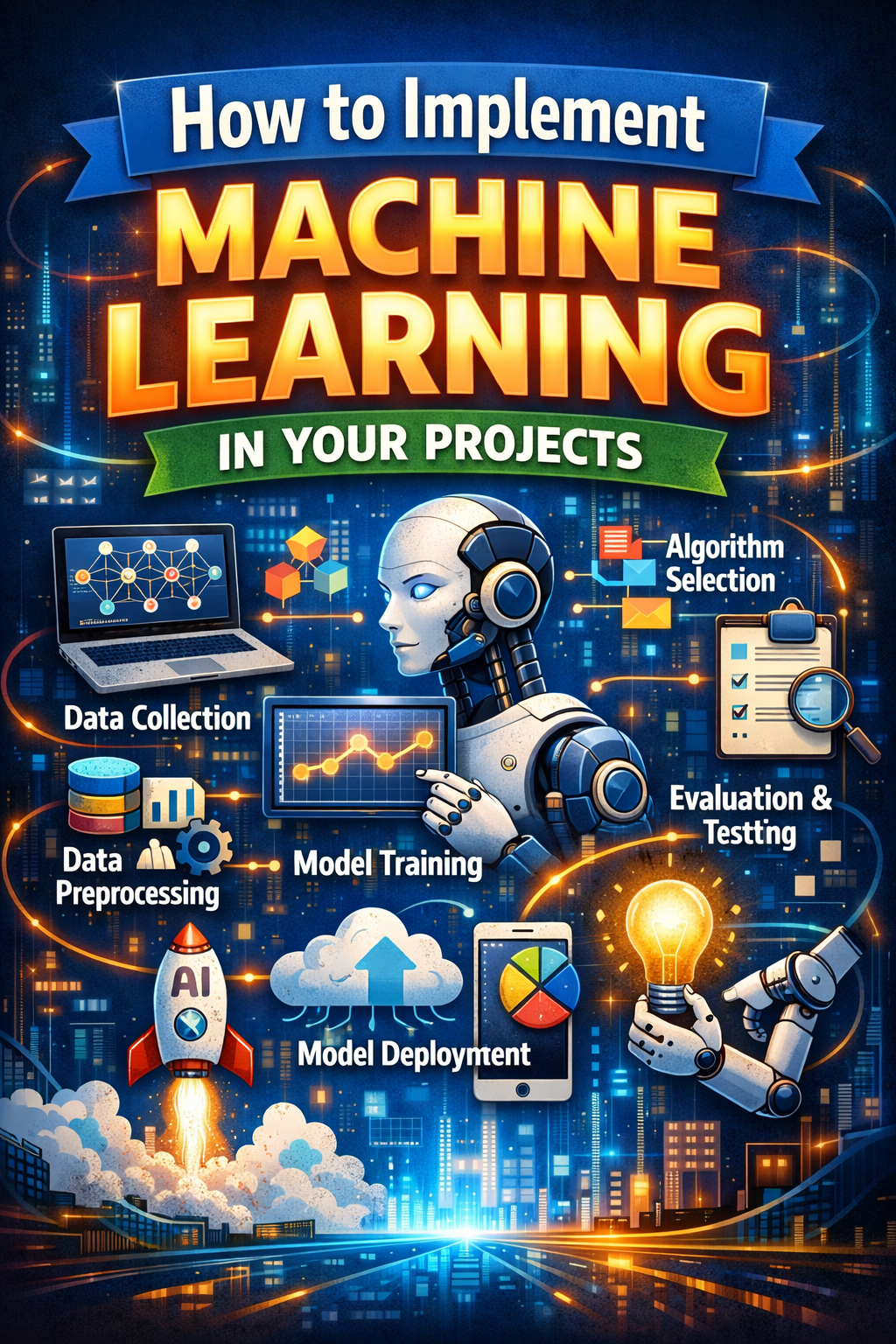

2. The Machine Learning Workflow

The process is iterative. You will likely go back and forth between these stages several times.

Phase 1: Data Acquisition & Cleaning

Your model is only as good as the data you feed it ("Garbage In, Garbage Out").

Collection: Gather data from APIs, SQL databases, or CSV files.

Cleaning: Handle missing values, remove duplicates, and fix outliers.

Feature Engineering: This is the "secret sauce." It involves selecting the most relevant variables or creating new ones (e.g., converting a "Timestamp" into "Day of the Week").
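The cleaning and feature-engineering steps above can be sketched with the standard library alone; in practice Pandas would usually do this work. The records, the field names, and the decision to drop (rather than fill) rows with missing values are all assumptions made for illustration.

```python
from datetime import datetime

DAY_NAMES = ["Monday", "Tuesday", "Wednesday", "Thursday",
             "Friday", "Saturday", "Sunday"]

# Hypothetical raw records; the field names are invented for this example.
raw_rows = [
    {"timestamp": "2024-01-01T09:30:00", "amount": "12.50"},
    {"timestamp": "2024-01-01T09:30:00", "amount": "12.50"},  # exact duplicate
    {"timestamp": "2024-01-06T18:00:00", "amount": ""},       # missing value
    {"timestamp": "2024-01-07T11:15:00", "amount": "40.00"},
]


def clean(rows):
    """Drop exact duplicates and rows whose amount is missing."""
    seen = set()
    cleaned = []
    for row in rows:
        key = (row["timestamp"], row["amount"])
        if key in seen or row["amount"] == "":
            continue
        seen.add(key)
        cleaned.append({"timestamp": row["timestamp"],
                        "amount": float(row["amount"])})
    return cleaned


def add_day_of_week(rows):
    """Feature engineering: derive the weekday name from the timestamp."""
    for row in rows:
        parsed = datetime.fromisoformat(row["timestamp"])
        row["day_of_week"] = DAY_NAMES[parsed.weekday()]
    return rows


features = add_day_of_week(clean(raw_rows))
```

Dropping incomplete rows is the simplest policy, but it discards data; filling in a median or a sentinel value is a common alternative when missing values are frequent.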

Phase 2: Modeling

This is where the "learning" happens.

Split the Data: Divide your dataset into a Training Set (usually 80%) to teach the model and a Test Set (20%) to see how it performs on data it hasn't seen before.

Select an Algorithm:

Linear Regression: For predicting continuous numbers.

Random Forest: A strong default for classification (and regression) on tabular data with nonlinear relationships between features.

Neural Networks: Best for images, audio, or very large datasets.

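Training: Fit the algorithm to the training set. Mathematically, the goal is to find the parameters $\theta$ that minimize a loss function. For regression, a common choice is the (halved) mean squared error over the $m$ training examples, where $h_\theta(x^{(i)})$ is the model's prediction for example $i$ and $y^{(i)}$ is the true value: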

$$J(\theta) = \frac{1}{2m} \sum_{i=1}^{m} (h_\theta(x^{(i)}) - y^{(i)})^2$$
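To make the split and training steps concrete, here is a dependency-free sketch that holds out 20% of a synthetic dataset and fits a one-feature linear model $h_\theta(x) = \theta_0 + \theta_1 x$ by gradient descent on the loss above. The data, the learning rate, and the iteration count are assumptions chosen for illustration; a real project would more likely rely on a library such as Scikit-learn.

```python
import random

rng = random.Random(0)

# Synthetic data (an assumption for illustration): y = 3x + 2 plus small noise.
data = [(x / 10, 3 * (x / 10) + 2 + rng.uniform(-0.1, 0.1)) for x in range(100)]

# 80/20 split: shuffle first so the test set is not just one end of the range.
rng.shuffle(data)
n_test = len(data) // 5
test_set, train_set = data[:n_test], data[n_test:]


def loss(theta0, theta1, rows):
    """J(theta) = 1/(2m) * sum((h(x) - y)^2) with h(x) = theta0 + theta1 * x."""
    m = len(rows)
    return sum((theta0 + theta1 * x - y) ** 2 for x, y in rows) / (2 * m)


def gradient_step(theta0, theta1, rows, learning_rate):
    """One gradient-descent update using the partial derivatives of J."""
    m = len(rows)
    errors = [(theta0 + theta1 * x - y, x) for x, y in rows]
    grad0 = sum(e for e, _ in errors) / m
    grad1 = sum(e * x for e, x in errors) / m
    return theta0 - learning_rate * grad0, theta1 - learning_rate * grad1


theta0, theta1 = 0.0, 0.0
initial_loss = loss(theta0, theta1, train_set)
for _ in range(5000):
    theta0, theta1 = gradient_step(theta0, theta1, train_set, learning_rate=0.01)

final_loss = loss(theta0, theta1, train_set)  # loss on data the model saw
test_loss = loss(theta0, theta1, test_set)    # loss on held-out data
```

Comparing `final_loss` with `test_loss` is the point of the split: a test loss far above the training loss suggests the model has memorized the training set rather than learned a pattern that generalizes.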

Phase 3: Evaluation

How do you know if it works? You use metrics based on your goal:

Accuracy: Percentage of correct predictions. It can be misleading when one class is much more common than the other.

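Precision/Recall: Precision is the share of predicted positives that are correct; recall is the share of actual positives that the model finds. Recall is crucial for things like medical diagnoses, where a "false negative" is dangerous.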

Mean Squared Error (MSE): Used for regression to see how far off your predictions are.
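The metrics above are simple enough to compute by hand, which helps show what each one measures. The following is a minimal sketch with made-up labels and predictions; the function names are illustrative, and Scikit-learn ships equivalents for real use.

```python
def confusion_counts(y_true, y_pred, positive=1):
    """Count true/false positives and negatives for a binary classifier."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == positive and p == positive)
    fp = sum(1 for t, p in pairs if t != positive and p == positive)
    fn = sum(1 for t, p in pairs if t == positive and p != positive)
    tn = sum(1 for t, p in pairs if t != positive and p != positive)
    return tp, fp, fn, tn


def accuracy(y_true, y_pred):
    tp, fp, fn, tn = confusion_counts(y_true, y_pred)
    return (tp + tn) / len(y_true)


def precision(y_true, y_pred):
    tp, fp, _, _ = confusion_counts(y_true, y_pred)
    return tp / (tp + fp) if (tp + fp) else 0.0


def recall(y_true, y_pred):
    tp, _, fn, _ = confusion_counts(y_true, y_pred)
    return tp / (tp + fn) if (tp + fn) else 0.0


def mean_squared_error(actual, predicted):
    return sum((a - p) ** 2 for a, p in zip(actual, predicted)) / len(actual)


# Made-up, imbalanced example: 3 positives out of 10 cases.
y_true = [1, 1, 1, 0, 0, 0, 0, 0, 0, 0]
y_pred = [1, 0, 0, 0, 0, 0, 0, 0, 0, 1]

acc = accuracy(y_true, y_pred)    # 7 of 10 predictions are correct
prec = precision(y_true, y_pred)  # 1 of 2 predicted positives is correct
rec = recall(y_true, y_pred)      # 1 of 3 actual positives is found

mse = mean_squared_error([3.0, 5.0, 2.0], [2.5, 5.0, 4.0])
```

Here the accuracy of 0.7 looks acceptable, yet the model misses two of the three positive cases, which is exactly the failure that recall exposes.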

3. Implementation Tools

You don't need to write algorithms from scratch. The ecosystem is very mature:

| Category | Tools |
| --- | --- |
| Languages | Python (Standard), R (Statistical focus), Julia |
| Libraries | Scikit-learn (General ML), Pandas (Data manipulation), NumPy |
| Deep Learning | TensorFlow, PyTorch |
| Cloud Services | AWS SageMaker, Google Vertex AI, Azure ML |

4. Deployment and Monitoring

A model on your laptop is a hobby; a model in an API is a product.

Export: Save your model (using formats like Pickle or ONNX).

API: Wrap the model in a framework like Flask or FastAPI so other apps can send it data and get predictions.

Monitoring: Models "drift" over time as real-world data changes. Track input data and prediction quality in production, and retrain when performance degrades.
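As a rough sketch of the export and monitoring steps, the example below saves a model with Pickle, restores it as a serving process would, and runs a very simple drift check. The "model" is a hypothetical dictionary of learned parameters, and the drift rule (live mean more than two training standard deviations from the training mean) is an assumption for illustration, not a standard threshold. The API layer is left out to keep the example dependency-free.

```python
import pickle
import statistics

# Hypothetical learned parameters standing in for a trained model.
model = {"theta0": 2.0, "theta1": 3.0}


def predict(params, x):
    """Linear prediction: theta0 + theta1 * x."""
    return params["theta0"] + params["theta1"] * x


# Export: serialize to bytes (writing these bytes to a file works the same way).
blob = pickle.dumps(model)

# Later, inside the serving process: restore the model and predict.
restored = pickle.loads(blob)
prediction = predict(restored, 4.0)


def has_drifted(training_values, live_values, max_shift=2.0):
    """Flag drift when the live mean sits more than max_shift training
    standard deviations away from the training mean."""
    mean = statistics.mean(training_values)
    spread = statistics.stdev(training_values)
    return abs(statistics.mean(live_values) - mean) > max_shift * spread


training_feature = [10.0, 11.0, 9.0, 10.5, 9.5]
stable = has_drifted(training_feature, [10.2, 9.8, 10.1])   # close to training
shifted = has_drifted(training_feature, [15.0, 16.0, 14.5])  # far from training
```

Only unpickle files from sources you trust, since loading a pickle can execute arbitrary code; ONNX avoids that risk for models that support it.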